I would like to focus on LTE capacity in the next few blog entries and present what can realistically be obtained. I have seen wild figures, mainly pushed by system vendors and consumed by many operators, journalists and writers who like to wow readers with the promise of new technologies. For network operators, erring on capacity expectations has negative consequences, as capacity fundamentally impacts the cost of the network on both the access side and the backhaul side. Inflated capacity figures lead to under-dimensioning on the access side and over-dimensioning on the backhaul side. So, for example, if we think an LTE cell will provide 100 Mbps of throughput while in reality it can only do 50 Mbps, the operator will be short by 50% of the expected capacity in the access network, resulting in poor user experience (e.g. slow downloads, blocking, etc.), and will have provisioned twice the required backhaul capacity, in which case half of that investment is sitting idle. This is why it is important to get capacity expectations right.

In this blog, I will look at the peak capacity of LTE. This is the maximum possible capacity which in reality can only be achieved in lab conditions. To understand the calculations below, one needs to be familiar with the technology (I will provide references at the end). But for now, let’s assume a 2×5 MHz LTE system. We first calculate the number of resource elements (RE) in a subframe (a subframe is 1 msec):

12 subcarriers x 7 OFDM symbols x 25 resource blocks x 2 slots = 4,200 REs

Then we calculate the data rate assuming 64 QAM with no coding (64QAM is the highest modulation for downlink LTE):

6 bits per 64QAM symbol x 4,200 REs / 1 msec = 25.2 Mbps
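The two steps above can be reproduced with a few lines of Python. This is only a sketch of the arithmetic in this post; the constant and function names are my own, and the 7 symbols per slot assume the normal cyclic prefix.

```python
# Sketch of the resource-element count and uncoded 64QAM rate for a 5 MHz carrier.
# All names here are illustrative; the values are the ones used in the text.
SUBCARRIERS_PER_RB = 12
SYMBOLS_PER_SLOT = 7        # assumes the normal cyclic prefix
SLOTS_PER_SUBFRAME = 2
SUBFRAME_SECONDS = 1e-3     # one subframe lasts 1 ms


def resource_elements_per_subframe(resource_blocks):
    """Number of REs in one 1 ms subframe for the given number of resource blocks."""
    return SUBCARRIERS_PER_RB * SYMBOLS_PER_SLOT * SLOTS_PER_SUBFRAME * resource_blocks


def raw_rate_mbps(resource_elements, bits_per_symbol):
    """Uncoded rate in Mbps when every RE carries one modulation symbol."""
    return resource_elements * bits_per_symbol / SUBFRAME_SECONDS / 1e6


res = resource_elements_per_subframe(resource_blocks=25)   # 25 RBs in 5 MHz
rate = raw_rate_mbps(res, bits_per_symbol=6)                # 64QAM
print(res, round(rate, 1))
```

Changing `resource_blocks` or `bits_per_symbol` gives the same raw figure for other bandwidths and modulation orders.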

The MIMO data rate is then 2 x 25.2 = 50.4 Mbps. We now have to subtract the overhead related to control signaling such as PDCCH and PBCH channels, reference & synchronization signals, and coding. These are estimated as follows:

- PDCCH channel can take 1 to 3 symbols out of 14 in a subframe. Assuming that on average it is 2.5 symbols, the amount of overhead due to PDCCH becomes 2.5/14 = 17.86 %.

- The downlink reference signal (RS) uses 4 symbols in every third subcarrier, resulting in 16/336 = 4.76% overhead for a 2×2 MIMO configuration

- The other channels (PSS, SSS, PBCH, PCFICH, PHICH) added together amount to ~2.6% of overhead

The total approximate overhead for the 5 MHz channel is 17.86% + 4.76% + 2.6% = 25.22%.

With the overhead rounded to 25%, the peak data rate is then 0.75 x 50.4 Mbps = 37.8 Mbps.
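As a quick check of the overhead budget, the sketch below adds up the three estimates and applies them to the 50.4 Mbps MIMO rate. The variable names are illustrative only, and the overhead values are the estimates from this post rather than figures taken from the standard.

```python
# Sketch: sum the overhead estimates from the text and apply them to the MIMO rate.
MIMO_RATE_MBPS = 50.4               # 2 x 25.2 Mbps from the previous step

pdcch_overhead = 2.5 / 14           # average of 2.5 control symbols out of 14
rs_overhead = 16 / 336              # reference-signal estimate for 2x2 MIMO
other_overhead = 0.026              # PSS, SSS, PBCH, PCFICH, PHICH (estimate)

total_overhead = pdcch_overhead + rs_overhead + other_overhead
peak_rate_mbps = MIMO_RATE_MBPS * (1 - total_overhead)

print(f"total overhead: {total_overhead:.2%}")
print(f"peak rate: {peak_rate_mbps:.1f} Mbps")
```

Using the unrounded 25.22% gives a value slightly under the 37.8 Mbps obtained with the rounded 0.75 factor; the difference is well within the accuracy of the overhead estimates.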

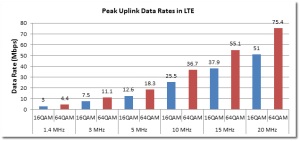

Note that the uplink would have lower throughput because the modulation scheme for most device classes is 16QAM in SISO mode only.

There is another technique to calculate the peak capacity which I include here as well for a 2×20 MHz LTE system with 4×4 MIMO configuration and 64QAM code rate 1:

Downlink data rate:

- Pilot overhead (4 Tx antennas) = 14.29%

- Common channel overhead (adequate to serve 1 UE/subframe) = 10%

- CP overhead = 6.66%

- Guard band overhead = 10%

Downlink data rate = 4 x 6 bps/Hz x 20 MHz x (1-14.29%) x (1-10%) x (1-6.66%) x (1-10%) = 298 Mbps.

Uplink data rate:

1 Tx antenna (no MIMO), 64 QAM code rate 1 (Note that typical UEs can support only 16QAM)

- Pilot overhead = 14.29%

- Random access overhead = 0.625%

- CP overhead = 6.66%

- Guard band overhead = 10%

Uplink data rate = 1 x 6 bps/Hz x 20 MHz x (1-14.29%) x (1-0.625%) x (1-6.66%) x (1-10%) = 82 Mbps.
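The second method is just a product of factors, so it is easy to put in a small helper. The function below is a sketch with names of my own choosing; it multiplies only the overheads listed above. Multiplying those four factors alone comes out a few percent higher than the quoted 298 Mbps and 82 Mbps (about 311 Mbps and 86 Mbps), so the quoted figures presumably fold in some further overhead that is not itemized here.

```python
# Sketch of the spectral-efficiency method: streams x bits/s/Hz x bandwidth,
# reduced by each listed overhead fraction in turn.
def peak_rate_mbps(streams, bits_per_symbol, bandwidth_mhz, overheads):
    """Peak rate in Mbps after applying each overhead fraction multiplicatively."""
    rate = streams * bits_per_symbol * bandwidth_mhz
    for fraction in overheads:
        rate *= 1.0 - fraction
    return rate


# Downlink: 4x4 MIMO, 64QAM, 20 MHz; pilot, common channel, CP, guard band.
downlink = peak_rate_mbps(4, 6, 20, [0.1429, 0.10, 0.0666, 0.10])

# Uplink: single transmit antenna, 64QAM, 20 MHz; pilot, random access, CP, guard band.
uplink = peak_rate_mbps(1, 6, 20, [0.1429, 0.00625, 0.0666, 0.10])

print(f"downlink: {downlink:.0f} Mbps, uplink: {uplink:.0f} Mbps")
```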

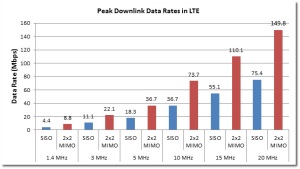

As an alternative to these methods, one can refer to 3GPP TS 36.213, Table 7.1.7.1-1, Table 7.1.7.2.1-1 and Table 7.1.7.2.2-1 for more accurate calculations of capacity. I have used these tables to generate the figures below for LTE peak capacity.

To conclude, the LTE capacity depends on the following:

- Channel bandwidth

- Network loading: number of subscribers in a cell which impacts the overhead

- The configuration & capability of the system: whether it’s 2×2 MIMO, SISO, and the MCS scheme.

I will address the issue of average capacity in my next blog entry. But for now, those interested in digging a little deeper into the background for the above calculations can refer to my LTE white paper series posted at:

Excellent fundamentals for calculating capacity…

a doubt

In the formula 6 bits per 64QAM symbol x 4,200 REs / 1 msec = 25.2 Mbps

how do we get 6 bits?

A 64QAM symbol carries 6 bits: 2^6 = 64 constellation points.

Similarly, a 16QAM symbol carries 4 bits: 2^4 = 16 constellation points, and so on for other orders of QAM modulation.
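In other words, the number of bits per symbol is the base-2 logarithm of the constellation size. A minimal sketch (the function name is my own):

```python
import math


def bits_per_symbol(constellation_size):
    """Bits carried by one symbol of an M-ary constellation (M a power of two)."""
    bits = math.log2(constellation_size)
    if constellation_size < 2 or not bits.is_integer():
        raise ValueError("constellation size must be a power of two, at least 2")
    return int(bits)


for name, size in [("QPSK", 4), ("16QAM", 16), ("64QAM", 64)]:
    print(name, bits_per_symbol(size))
```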

Hello, nice tutorial. For the downlink shared channel (DSH), I want to calculate the throughput of an LTE downlink system for SISO mode, transmit diversity MIMO 2×1, transmit diversity MIMO 4×2, and open loop spatial multiplexing MIMO 4×2 antenna systems. Please give a detailed analysis of the throughput calculation for 1.4 MHz bandwidth; I would really appreciate that.

The calculations are for two stream MIMO/Spatial Multiplexing. For 2×1 MIMO, there is no capacity gain (i.e. no spatial multiplexing) and this becomes equivalent to SISO channel capacity (in practice, there is gain because of reduction in fading due to transmit diversity). For 4×2 MIMO theoretically the channel capacity is the same as 2×2. 1.4 MHz channel would have a slightly higher percentage of overhead. On the whole, you can scale the capacity based on these ‘rules of thumb’.

Hi, in the last method where it is suggested to use the 3GPP document 36.213 tables for accurate results, do these give the maximum capacity including the overhead? Or should the overhead be taken into consideration even when using these tables? Thank you

The tables account for overhead.

I have another question, if you don’t mind clarifying please. The calculation of the Downlink RS signal uses 4 symbols in every third subcarrier resulting in a total of 16. If SISO is considered then only 8 RSs in a resource block are considered. Is that correct?

Going back to the example above: does that mean that only 16 RSs are used in the total 336 subcarriers? For instance, if we have 4 RSs in every third subcarrier (considering 2×2 MIMO), then for a total of 336 subcarriers, don’t we have (336/3=112) instances where we have 4RSs? Or is this the wrong way to look at it? Thank you.

Otherwise I think it can be calculated as: (SISO): 12*7 = 84, and 4 out of those 84 are used for RS, which gives (4/84) * 100% = 4.76% as well…. Clearer reasoning, I think

Hi,

Please clarify for me, 1 RE = 1bit ?

How do you relate number of RE to the speed in b/s ?

How do you relate the number of users to the number of resource elements, or to the number of PRBs?

Best regards

One RE is one subcarrier for the duration of one OFDM symbol; it can be modulated with anything from QPSK to 64QAM. The rate will depend on the modulation (e.g. QPSK is 2 bps/Hz). Users are scheduled on PRBs, which is a MAC layer function, but the more resources there are, the larger the number of users that can be supported.
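To make the link between REs and bit rate concrete, here is a rough sketch of the uncoded rate carried by PRBs over one 1 ms subframe. It ignores all control and reference-signal overhead and assumes the normal cyclic prefix (14 symbols per subframe); the names are illustrative.

```python
# Sketch: uncoded rate per PRB pair (12 subcarriers x 14 OFDM symbols in 1 ms).
RES_PER_PRB_PAIR = 12 * 14          # 168 REs, assuming the normal cyclic prefix
BITS_PER_SYMBOL = {"QPSK": 2, "16QAM": 4, "64QAM": 6}


def raw_rate_kbps(modulation, prb_pairs=1):
    """Uncoded rate in kbit/s; bits per 1 ms subframe equal kbit/s numerically."""
    return RES_PER_PRB_PAIR * BITS_PER_SYMBOL[modulation] * prb_pairs


for modulation in BITS_PER_SYMBOL:
    print(modulation, raw_rate_kbps(modulation), "kbps per PRB pair")
```

With 25 PRB pairs and 64QAM this gives 25,200 kbps, which matches the 25.2 Mbps single-stream figure in the post.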

Thanks for your answer. I have another question: do you know how many users can be scheduled in a frame?

This is an implementation issue and is vendor dependent. The standard does not specify it.

hello and thanks for a very resourceful explanation on “LTE peak capacity”

I have some question:

1. Say I have an S111 LTE site at 20 MHz. What would the required backhaul capacity be for the site, assuming the transport is full IP?

2. Can I use the peak capacity calculation to work out the backhaul capacity? Please show me the procedure if it is different.

Thanks

The NGMN published whitepapers on LTE capacity guidelines as well as on backhaul. I suggest you look at those for the answer (http://www.ngmn.org/). As for the use of peak capacity for backhaul this is a matter of policy. Some operators do that to back up their marketing claims on LTE data rates. Others decide to oversubscribe the backhaul link.

It’s really an amazing article; I also checked your explanation of average data rate. I have a question: how will the number of users affect the average data rate? Is there any formula or model to show the relationship? Thank you very much.

I’m not aware of any specific formula for the relationship between average data rate and number of users. The way cell loading impacts the capacity of the cell and overall data rates is largely dependent on vendor implementation, because this is a MAC scheduling issue which is left to the vendors to optimize and use for differentiation.

Hi Frank,

How to calculate the no of RB in a given Bandwidth

The number of RBs is fixed and provided in the standard. It varies depending on the channel bandwidth.
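For reference, the standard LTE channel bandwidths map to 6, 15, 25, 50, 75 and 100 resource blocks. The lookup below is a small sketch; the table and function names are my own. Each RB spans 12 subcarriers of 15 kHz, i.e. 180 kHz, which is why a 5 MHz channel occupies about 4.5 MHz.

```python
# Sketch: resource blocks per standard LTE channel bandwidth.
RESOURCE_BLOCKS_BY_BANDWIDTH_MHZ = {1.4: 6, 3: 15, 5: 25, 10: 50, 15: 75, 20: 100}
RB_WIDTH_MHZ = 0.18                 # 12 subcarriers x 15 kHz


def resource_blocks(bandwidth_mhz):
    """Number of RBs for a standard channel bandwidth given in MHz."""
    try:
        return RESOURCE_BLOCKS_BY_BANDWIDTH_MHZ[bandwidth_mhz]
    except KeyError:
        raise ValueError(f"{bandwidth_mhz} MHz is not a standard LTE bandwidth")


def occupied_bandwidth_mhz(bandwidth_mhz):
    """Bandwidth actually occupied by the RBs, excluding the guard band."""
    return resource_blocks(bandwidth_mhz) * RB_WIDTH_MHZ


print(resource_blocks(5), round(occupied_bandwidth_mhz(5), 2))
```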

thankx a lot for your reply

Hi Frank,

How does the eNodeB know about the MME, and in which message does the eNodeB tell the UE about the MME in the initial attach procedure?

Hi Frank, thanks for your sizzling explanation; it has given me a much deeper understanding. But let me seek your help on this. The NCA of Ghana has licensed 3 companies to provide 4G services. Each was allocated a 15 MHz band. Now, knowing that LTE has channel bandwidths from 1.4 to 20 MHz, my question is: can they still provide service in the 20 MHz channel bandwidth since they have 15 MHz each? Thanks, Ebenezer Annang

Annang, unfortunately, they cannot use 20 MHz if their allocation is only 15 MHz… The good news is that 15 MHz is a profile of LTE and they can use that. However, I have not heard anyone in practice doing this.

thanks Frank; i will seek some clarification with NCA on that.

sure , but the 2.6 ghz band has 50 mhz for use, so in essence if providing to 3 licensed companies it will have that distribution and a little left. what do you think?

The operators can deploy 5, 10, or 15 MHz (if the latter is supported by the equipment) in different configurations such as multicarrier or N=3 reuse plan…

correction in my band calculation for 2.6ghz; the total spectrum under the 2.6 ghz band is 70 bandwidth. the distribution for the licensed operators were 2×15 mhz, 2x15mhz and type 2 band of 1×30 .

need some clarifications. the effective channel bandwidth for 5 mhz is 4.5 considering the guard-band of 10% in the uplink. so, my question is to know whether was considered in The total approximate overhead as indicated has 17.86% + 4.76% + 2.6% = 25.22%. ? thank you

Yes, this was factored in through the number of resource blocks.

sorry the query is on download i should say , not up-link.

Hello Frank,

I have one question regarding Reference Signal Sequence in LTE,

In 3GPP TS 36.211 V8.9.0, Section 6.10, the sequence generation is given, and the reference signal sequence is given by the equation rl,n(m), where m=0,1,…,2*N_RB….

Why the range is given as 2*Number of resource block???

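For a given antenna port, the reference signal sits on every sixth subcarrier of an OFDM symbol that carries RS, so each 12-subcarrier resource block holds 2 reference symbols. The sequence therefore needs 2 entries per resource block.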

The uplink data rate is reduced by

- Pilot overhead = 14.3%

- Random access overhead = 0.625%

- CP overhead = 6.66%

- Guard band overhead = 10%

Seeing that the Sounding RS is configurable, is it already within one of the above percentages, or should we add the additional 7% if we design to include it?

Thanks Frank,

If we have LTE 10 MHZ FDD and if we suppose that we have enough MW BW to achieve maximum Throughout….how much max throughput i can commit to get like 60% of the max which is around (42) from (73)..and why i can’t commit 100 % from the radio point of view .

and i saw 79 Mbps result of throughput test !is it possible?!

Thanks and Regards

AsA

Thank you Frank! This is very useful.

I have a question: why can the uplink only use SISO while the downlink uses MIMO? Is this related to the UE category of our handsets?

Thanks.

Derek, yes, the UE categories define the supported features and typically to save on power in the handset only one antenna transmits.

Frank

Thanks for the reply! I will look it up in the UE category info then 🙂

Frank, could you please illustrate how did you calculate the CAT4 uplink peak throughput (20MHz bandwidth) by using 36.213 table 8.6.1-1 and 7.1.7.2.1-1? 50Mbps uplink means MCS=23 is used, but the modulation order would be 6, 64QAM. CAT4 UE only supports up to 16 QAM, right?

Thanks

Eric, that’s correct, Cat4 UE only supports 16QAM on UL (MCS 20 if I recall correctly). The definition of Cat 4 is 51024 TB bits/TTI which is 51 Mbps (1 ms TTI). I think table 7.1.7.2.1-1 is for DL-PDSCH.

Table 7.1.7.2.1-1 is for both DL-PDSCH and UL-PUSCH if you check the specs. My question is: how could a Cat 4 phone reach 51 Mbps on the uplink?

You are correct – the table is for DL and UL. The only explanation I have now is that the coding rate must be close to 1. I did the exercise of accounting for the overhead on the UL and with 1 PUCCH, I got 51.3 Mbps. For 16QAM, the max throughput without any overhead is 67.2 Mbps. 51 Mbps means 24% overhead. 43 Mbps means 35% overhead which is probably too high.
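The arithmetic in this reply can be checked with a short sketch. It assumes 100 resource blocks in 20 MHz and 14 symbols per subframe (normal cyclic prefix), and the names are my own. By this calculation, 51 Mbps corresponds to about 24% overhead and 43 Mbps to roughly 36%, close to the rounded 35% quoted above.

```python
# Sketch: overhead implied by a given uplink throughput with 16QAM in 20 MHz.
RESOURCE_BLOCKS_20MHZ = 100
RES_PER_SUBFRAME = RESOURCE_BLOCKS_20MHZ * 12 * 14   # 16,800 REs per 1 ms
BITS_PER_16QAM_SYMBOL = 4

# Bits per 1 ms subframe divided by 1000 gives Mbps.
raw_16qam_mbps = RES_PER_SUBFRAME * BITS_PER_16QAM_SYMBOL / 1000


def implied_overhead(throughput_mbps, raw_mbps=raw_16qam_mbps):
    """Fraction of the raw rate that is not delivered as user throughput."""
    return 1 - throughput_mbps / raw_mbps


print(f"raw 16QAM rate: {raw_16qam_mbps:.1f} Mbps")
for throughput in (51.024, 43.0):
    print(f"{throughput} Mbps -> {implied_overhead(throughput):.1%} overhead")
```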